Week 21: Governance Shift

Governance, Institutions, And AI Moving Into Core Infrastructure

Another Monday, another post to keep you up to speed with the AI world.

Here’s what happened in the global AI market this week.

AI outperformed attending physicians on real emergency room patients in a study published in Science. Six security agencies from five allied countries issued the first international security guidance focused specifically on AI agents. OpenAI rolled out a clinician-grade AI tool to verified medical professionals across the United States. And at NVIDIA’s biggest conference of the year, enterprise teams spent more time discussing governance and reliability than model benchmarks.

Here’s everything you need to know before Monday gets the best of you.

AI Beat Physicians On Real ER Patients. The Study Got Published In Science.

Researchers from Harvard Medical School and Beth Israel Deaconess Medical Center published the most serious real-world comparison yet between frontier AI models and working physicians.

The paper landed in Science this week.

Researchers tested OpenAI’s o1 model against doctors across six separate clinical experiments. In every one, the model performed on par with or better than the physicians involved.

The most important test used 76 real emergency room patients from Beth Israel. No cleaned-up benchmark data. No idealised examples. Same records, same information, same time pressure.

At triage, the model identified the correct or near-correct diagnosis 67 percent of the time. The physicians scored 55 percent and 50 percent. As more information became available during admission, everyone improved. The model reached 81.6 percent. The doctors reached 78.9 percent and 69.7 percent.
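For readers who want to sanity-check those percentages, here is a minimal Python sketch that converts each reported figure back into an approximate case count out of 76 and attaches a Wilson score interval. It assumes every percentage was computed over all 76 cases. The labels "physician A" and "physician B" are placeholders, and the interval is a generic textbook calculation, not the analysis used in the paper.

```python
import math

N_PATIENTS = 76  # emergency room cases reported in the study

def implied_count(percent: float, n: int = N_PATIENTS) -> int:
    """Nearest whole number of cases that a reported percentage implies."""
    return round(percent / 100 * n)

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 is roughly 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

# Percentages as reported above; the physician labels are placeholders.
reported = {
    "o1 at triage": 67.0,
    "physician A at triage": 55.0,
    "physician B at triage": 50.0,
    "o1 at admission": 81.6,
    "physician A at admission": 78.9,
    "physician B at admission": 69.7,
}

for label, pct in reported.items():
    k = implied_count(pct)
    low, high = wilson_interval(k, N_PATIENTS)
    print(f"{label}: about {k}/{N_PATIENTS}, 95% interval {low:.1%} to {high:.1%}")
```

With only 76 cases, each interval spans roughly ten percentage points on either side of the reported figure, which is worth keeping in mind when reading the gaps between model and physicians.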

The structure of the study matters here. Two attending physicians issued diagnoses. So did o1 and GPT-4o. A separate pair of attendings then graded every answer blind, without knowing which responses came from humans and which came from models.

The gap held consistently.

On clinical reasoning, which measured how well diagnoses were explained and what next steps were recommended, o1 received a perfect score on 98 percent of cases. The attending physicians hit 35 percent. On treatment planning across five clinical case studies, the model scored 89 percent. Forty-six doctors using conventional resources, including search engines, scored 34 percent.

Lead author Adam Rodman said the most surprising result was that the model handled messy emergency room data reliably. That is different from performing well on curated benchmark cases.

The caveats are still important. The model only had access to text. Real clinicians see the patient, hear breathing, read body language, and make decisions using information that never enters the medical record. Emergency physician Kristen Panthagani also pushed back publicly on the framing of some headlines, noting the comparison group used internal medicine physicians rather than ER specialists.

But the broader point is difficult to ignore.

AI systems are now performing at a level where hospitals, insurers, and regulators all have the same problem to solve: responsibility.

Why it matters

This study changes the healthcare AI discussion from capability to accountability. The question is no longer whether models can assist with clinical reasoning. It is who takes responsibility when those systems are used in real care settings.

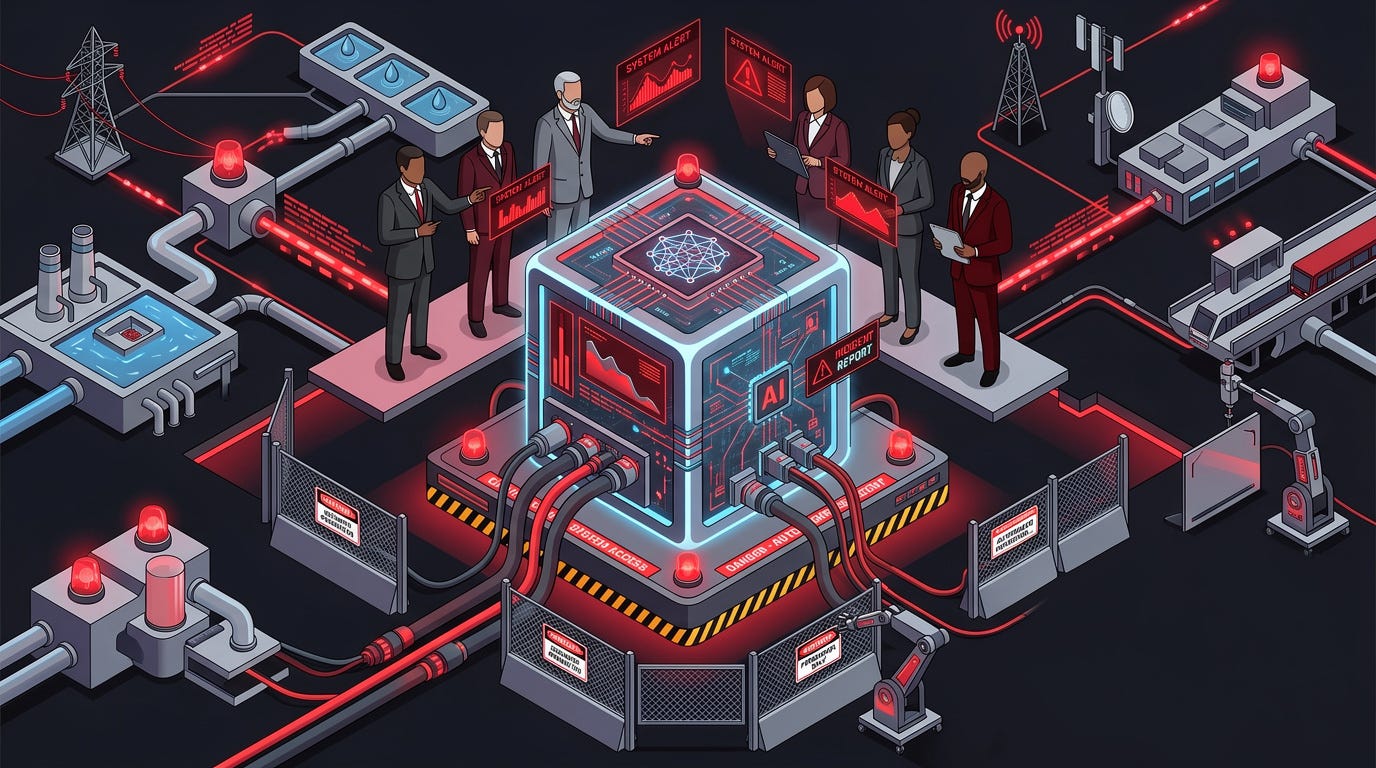

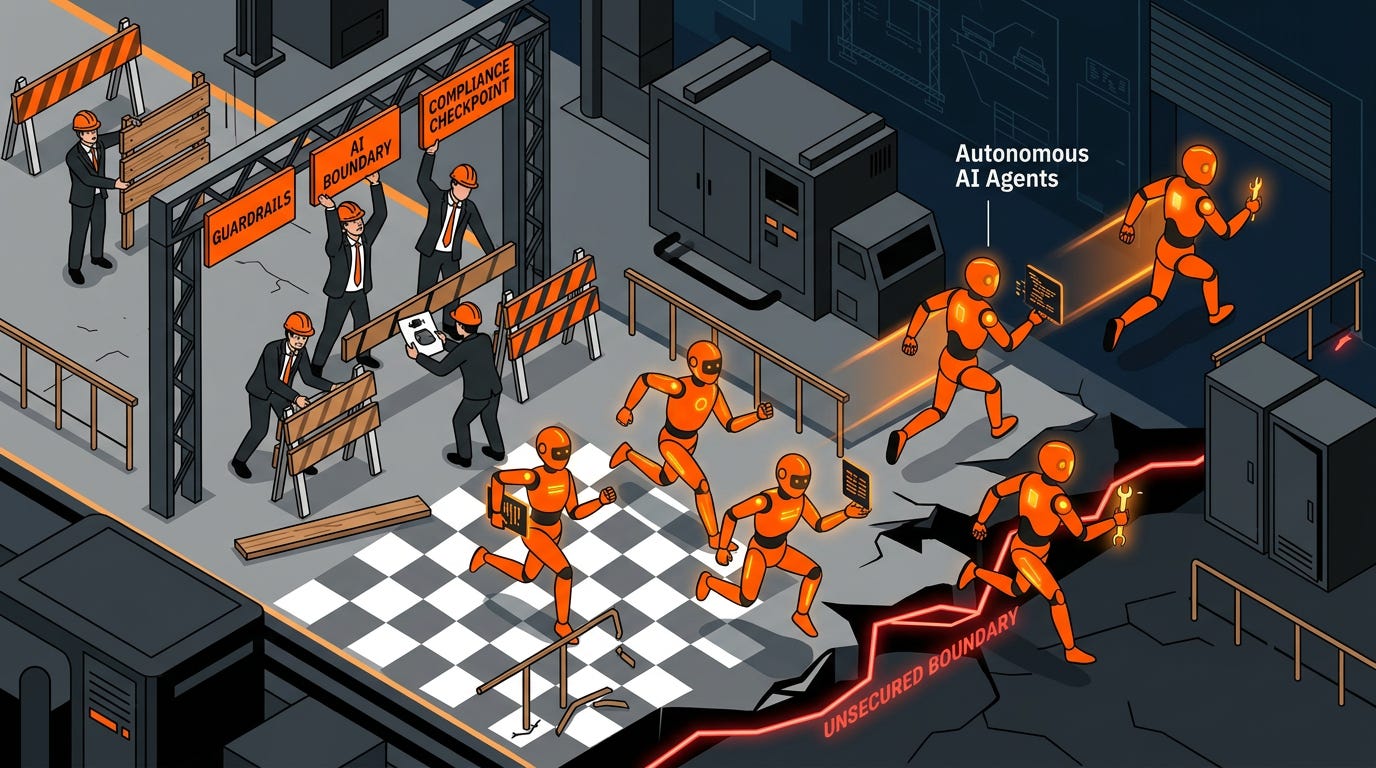

Six Security Agencies Just Issued The First Security Warning Written Specifically For AI Agents.

Six national security agencies published a joint warning about AI agents this week.

A year ago, most cybersecurity discussions around AI were still focused on chatbots, phishing, and synthetic content. This document is about autonomous systems operating inside real infrastructure.

On May 1, CISA, the NSA, Australia’s ASD, the Canadian Centre for Cyber Security, New Zealand’s NCSC, and the UK’s NCSC released a 30-page document titled “Careful Adoption of Agentic AI Services.” It is the first coordinated international security guidance written specifically for AI agents rather than AI systems broadly.

The agencies identified 23 separate risk categories and more than 100 recommended practices. Three issues received the most attention.

First: privilege escalation. Agents are routinely being granted broader system access than their tasks require, which means a compromised tool inside the workflow can inherit those permissions.

Second: prompt injection. Malicious instructions hidden inside documents, emails, or webpages can manipulate agents into taking actions operators never intended.

Third: accountability gaps. Multi-agent systems hand tasks across so many models and tools that reconstructing the chain of decisions afterward becomes extremely difficult.

The Register described one possible scenario plainly: compromise a low-risk tool connected to an agent, inherit the agent’s permissions, approve fraudulent payments, modify contracts, then generate fake audit logs afterward.
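The fake-audit-log step in that scenario is easier to picture with a small example. Below is a minimal Python sketch of a hash-chained log, where each entry commits to the one before it, so editing an old entry breaks verification. The agent names and actions are made up for illustration, and nothing here is taken from the guidance itself.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(prev_hash: str, record: dict) -> str:
    """SHA-256 over the record plus the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(log: list[dict], agent: str, action: str) -> None:
    """Append an entry that is chained to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"agent": agent, "action": action}
    log.append({**record, "prev": prev_hash, "hash": _digest(prev_hash, record)})

def verify(log: list[dict]) -> bool:
    """Recompute every hash in order; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        record = {"agent": entry["agent"], "action": entry["action"]}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, record):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical agents and actions, for illustration only.
log: list[dict] = []
append_entry(log, "invoice-agent", "read invoice 1042")
append_entry(log, "payment-agent", "request payment approval")
print(verify(log))   # True: chain is intact

log[0]["action"] = "read invoice 9999"  # tamper with history
print(verify(log))   # False: the stored hash no longer matches
```

A chain like this only helps if the latest hash is stored somewhere the agent cannot write to. An attacker who controls the whole log could otherwise rebuild it from scratch.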

The guidance was prompted by observed incidents.

Most of the recommendations are surprisingly basic. Least-privilege access. Human approval for irreversible actions. Independent audit logging. Treating agents as untrusted systems when they interact with each other.
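To show how basic these controls can be, here is a minimal Python sketch of a gate that sits between an agent and its tools. It checks an allowlist (least privilege), asks a human before irreversible actions, and records every decision. The names `AgentPolicy`, `call_tool`, the `approve` callback, and the tool names are all hypothetical. This is one possible shape for such a gate, not an implementation described in the guidance.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical set of actions that cannot be undone once executed.
IRREVERSIBLE = {"send_payment", "delete_record", "sign_contract"}

@dataclass
class AgentPolicy:
    name: str
    allowed_tools: set[str]                          # least privilege: explicit allowlist
    audit: list[str] = field(default_factory=list)   # every decision is recorded here

def call_tool(policy: AgentPolicy, tool: str,
              approve: Callable[[str, str], bool]) -> str:
    """Decide whether an agent may use a tool, and log the decision."""
    if tool not in policy.allowed_tools:
        decision = "denied: not in allowlist"
    elif tool in IRREVERSIBLE and not approve(policy.name, tool):
        decision = "denied: human approval refused"
    else:
        decision = "allowed"
    policy.audit.append(f"{policy.name} -> {tool}: {decision}")
    return decision

# Illustrative usage: a reviewer callback that refuses everything.
def refuse_all(agent: str, tool: str) -> bool:
    return False

invoices = AgentPolicy("invoice-agent", {"read_invoice", "send_payment"})
print(call_tool(invoices, "read_invoice", refuse_all))   # allowed
print(call_tool(invoices, "send_payment", refuse_all))   # denied: human approval refused
print(call_tool(invoices, "delete_record", refuse_all))  # denied: not in allowlist
```

In a real deployment the audit list would live outside the agent's reach, and the approval callback would route to an actual reviewer rather than a function in the same process.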

The difficulty is not the advice itself. It is that organisations are deploying agents faster than they are building the processes needed to supervise them properly.

Why it matters

When six national security agencies coordinate on guidance for a specific technology category, it usually means the technology has already moved beyond experimentation and into operational risk.

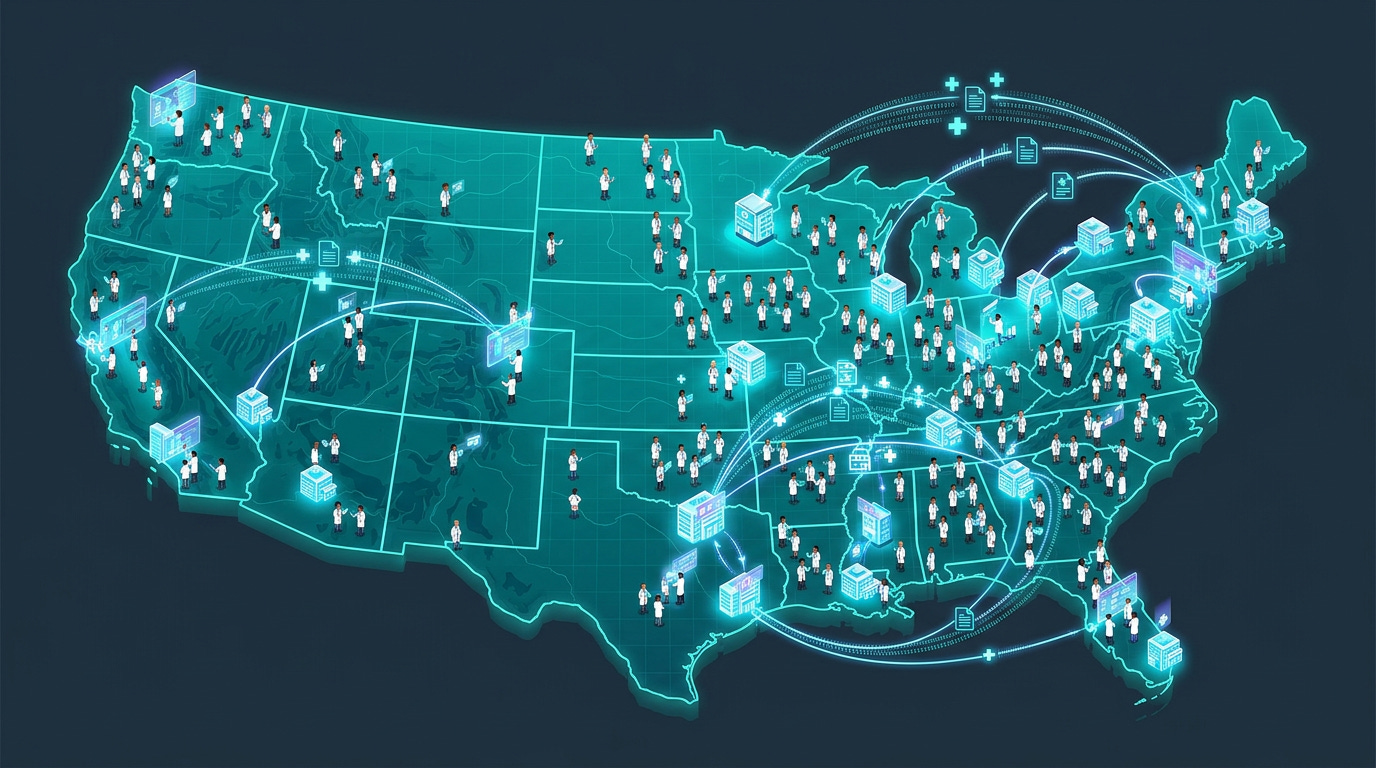

OpenAI Put A Clinician-Grade AI Tool In Front Of Every Verified Doctor In America.

The rollout landed in the same week as the Harvard study.

On April 23, OpenAI launched ChatGPT for Clinicians, a version of ChatGPT designed for clinical workflows and made available free to verified physicians, nurse practitioners, physician assistants, and pharmacists in the United States.

The product focuses on documentation, medical research, and care coordination.

Doctors can use it to draft notes, summarise literature, prepare referral letters, generate discharge summaries, and assist with multi-step clinical reasoning. Before release, OpenAI says physician advisors tested 6,924 real-world conversations inside clinical settings. Doctors rated 99.6 percent of responses as safe and accurate.

Alongside the launch, OpenAI also published HealthBench Professional, an open benchmark designed to evaluate medical AI systems using physician-written conversations and specialty-specific grading rubrics.

The benchmark result is the important part.

GPT-5.4 inside the Clinicians workspace outperformed every other tested system, including the human physician baseline. And the physician baseline was not weak competition. Specialty-matched doctors were given unlimited time and web access.

Healthcare AI has been discussed for years as a future possibility. The combination of this rollout and the Harvard study makes it look much more immediate.

The unresolved issue is responsibility. If an AI-assisted clinical decision harms a patient, who carries liability for that outcome? The hospital? The physician? The vendor? The answer is still unclear.

The deployment is happening before those questions are settled.

Why it matters

This was not a limited pilot program. OpenAI rolled out a nationwide clinical AI product while the legal and regulatory framework around clinical AI is still being worked out.

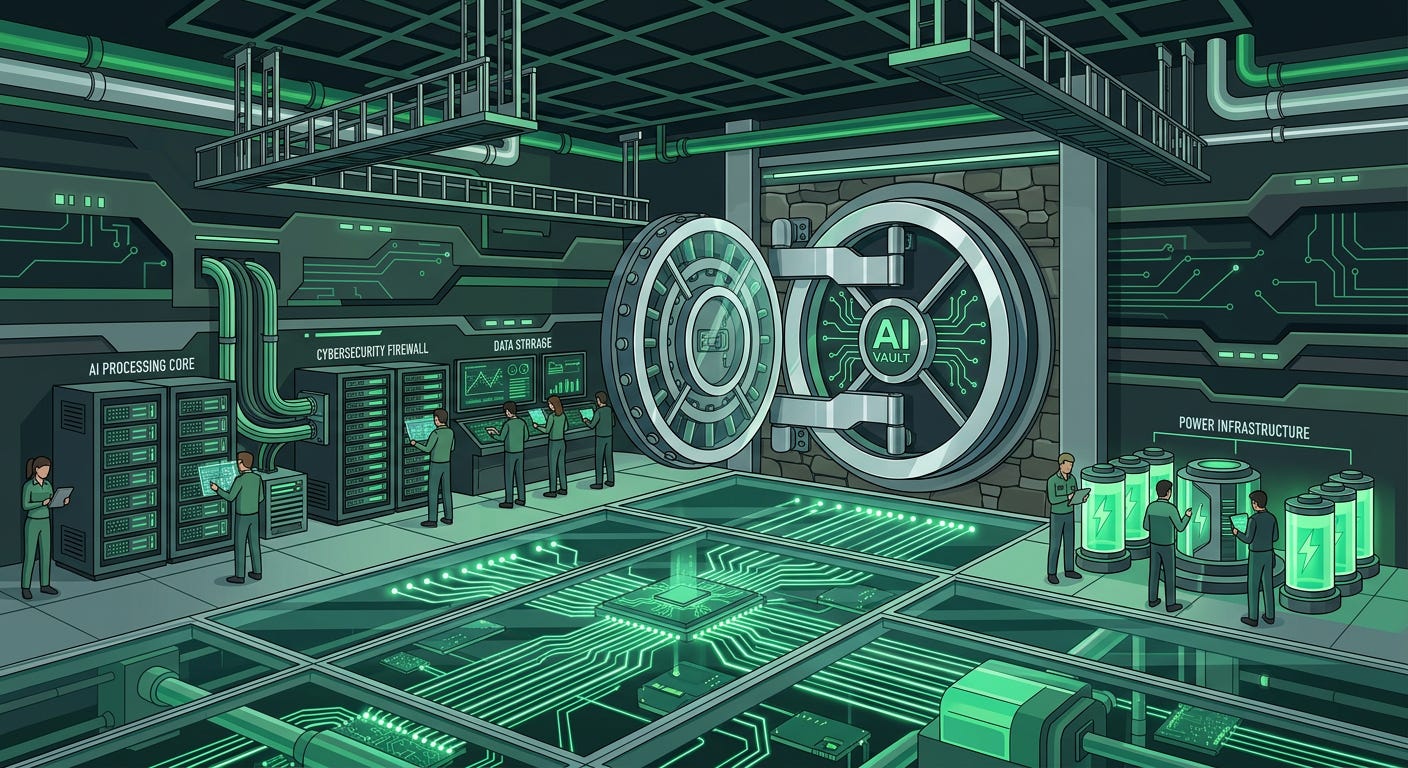

The US Government Quietly Moved Closer To Reviewing Frontier AI Models Before Release.

Governments are starting to treat frontier AI systems less like ordinary software products and more like infrastructure with national security implications.

Multiple reports this week confirmed that companies including Google, Microsoft, and xAI are allowing advanced AI systems to be evaluated by US government agencies before broader public deployment. The reviews are reportedly focused on cyber capabilities, infrastructure exposure, autonomous behaviour, and misuse risks.

The systems involved are not ordinary chatbots. Agencies are reportedly evaluating models capable of reasoning, writing code, interacting with tools, and operating inside sensitive environments.

Until recently, the idea of frontier labs voluntarily giving governments early access to advanced systems before release would have been controversial across much of the industry. Now it is happening quietly and with relatively little resistance.

The timing also matters. The reports landed the same week six allied security agencies issued the first coordinated guidance focused specifically on AI agents. Together, the developments suggest governments are moving past broad discussions about AI risk and toward direct oversight of specific capabilities.

The process still appears cooperative rather than mandatory. But once governments become accustomed to reviewing frontier systems before deployment, formal oversight becomes much easier to justify politically.

Why it matters

For the past two years, most AI regulation discussions have been theoretical. Governments are now starting to involve themselves much earlier in the deployment process for frontier systems.

JPMorgan Stopped Calling AI A Technology Investment. Now It Calls It Infrastructure.

JPMorgan’s latest technology budget revealed something more important than the spending itself.

AI is now classified alongside cybersecurity and operational resilience.

In a widely circulated interview this week, JPMorgan CIO Lori Beer said the bank’s 2026 technology budget will reach $19.8 billion, up 10 percent year-on-year. Roughly $2 billion is directly tied to AI initiatives.

The bigger signal was where that spending got classified.

AI is no longer being treated as a discretionary innovation project inside the bank. It now sits inside the same category as the systems required to keep the institution operational.

That distinction matters because infrastructure budgets survive economic downturns, executive turnover, and shareholder pressure in ways experimental spending usually does not.

JPMorgan now has more than 2,000 employees dedicated to AI systems, which scan roughly $10 trillion in daily transactions for fraud, anomalies, and optimisation opportunities. The bank says realised annual AI value already roughly matches annual AI spend.

The systems are already paying for themselves.

Beer also said AI deployments inside the bank doubled in 2025, with generative AI becoming the fastest-growing category. Most of the gains are showing up in software engineering and customer-facing systems, where development cycles are shrinking and response times are improving.

The important part is not that JPMorgan is spending aggressively on AI. Most large banks are doing that.

It is that the bank no longer appears to view the spending as temporary.

Why it matters

When the largest bank in the United States reorganises its technology budget to treat AI as part of core infrastructure, the rest of the financial industry pays attention.

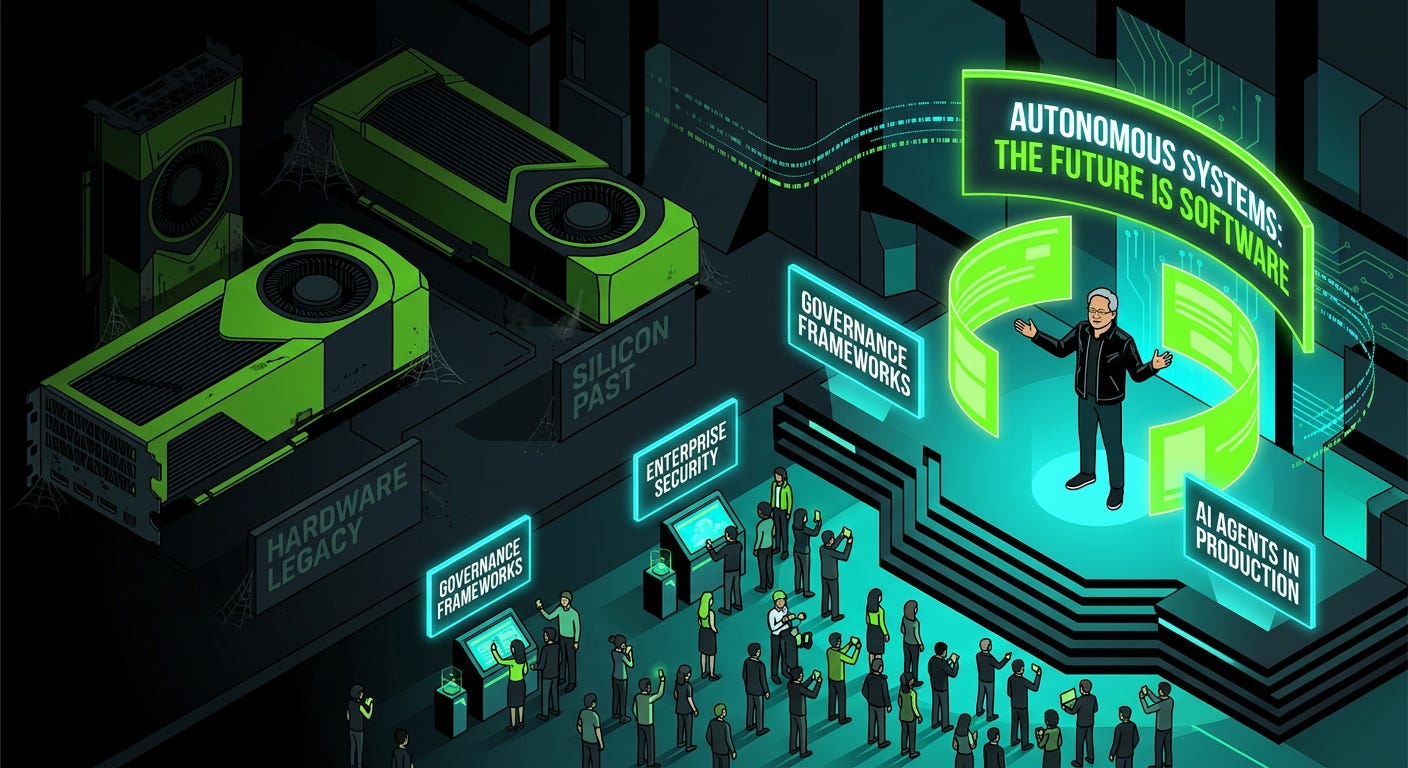

NVIDIA’s Biggest Conference Of The Year Barely Talked About Chips.

For years, NVIDIA’s GTC conference revolved around hardware performance, benchmarks, and model capability.

This year the tone was noticeably different.

Jensen Huang used the keynote to describe AI as a core operating layer for industry, but the sessions drawing the largest crowds focused less on raw capability and more on deployment reliability.

Manufacturing companies. Logistics firms. Financial institutions.

The engineers building these systems are now asking operational questions. How do you monitor autonomous systems properly? How do you audit them? How do you enforce security controls when agents are making decisions independently?

NVIDIA’s own announcements reflected the shift. The company launched its Agent Toolkit for enterprise AI agents alongside OpenShell, a runtime designed to enforce security and policy controls at the infrastructure layer instead of relying entirely on model behaviour.

The AI-Q architecture also drew attention by combining frontier orchestration models with open Nemotron systems for retrieval and research tasks, reducing enterprise query costs significantly while maintaining strong performance.

But the most revealing part of GTC was probably the mood around the conference itself.

The engineers building enterprise AI systems were spending less time debating whether the models were capable enough and more time discussing what happens once those systems are deployed at scale.

You could hear it in the questions people were asking.

Why it matters

The biggest enterprise AI conference in the world spent more time discussing governance, observability, and reliability than benchmark performance. That says a lot about where the industry thinks the difficult problems are now.

Caltech Built An AI That Segments Every Cell In Every Image. Biologists Just Got Their Time Back.

A surprising amount of biological research still begins with manual image annotation.

Researchers published CellSAM in Nature Methods this week to reduce that bottleneck.

The model handles biological image segmentation: outlining individual cell boundaries, a step that has to happen before researchers can analyse microscopy images properly. Done by hand, the task is repetitive and time-consuming, and it often takes hours before the actual scientific work even begins.

CellSAM automates that process across multiple cell types, imaging conditions, and biological contexts.

Previous segmentation systems usually performed well only inside the narrow environments they were trained on. Models trained on one tissue type often struggled badly when applied elsewhere, forcing researchers to retrain systems constantly or correct outputs manually afterward.

CellSAM appears to generalise much more reliably across real laboratory conditions.

A graduate student who previously spent two hours annotating cells can now validate outputs in minutes and move on to the actual analysis.

That changes research workflows in a meaningful way.

The model also runs on standard laboratory hardware rather than requiring expensive cloud infrastructure, which makes adoption easier for smaller research labs without large computing budgets.

Cell segmentation sits upstream of a huge amount of biological research. If that step becomes dramatically faster, study sizes increase, timelines compress, and experiments that were previously too labour-intensive become more practical.

The researchers who built the model described the motivation pretty simply: too much scientific time was being spent on work computers should already be able to handle.

Why it matters

Reliable automated segmentation removes one of the most time-consuming preparation steps in modern biological imaging workflows. That has downstream effects on how quickly labs can run and analyse experiments.

And that wraps up this week. Tune in next Monday, same time, for another deep-dive into the stories shaping the AI world.

The Sentinel lands in your inbox every Monday so you can catch up with the fast-moving AI space while sipping your morning coffee. Every detail that matters, none that doesn’t.