Week 20: Breaking Control

Open Clouds, Autonomous Agents, and Billions Backing The Next Phase

Another Monday, another post to keep you up to speed with the AI world.

Here’s what happened in the global AI market this week.

Microsoft’s exclusive grip on OpenAI ended. Google put $40 billion behind its biggest AI rival. Coding agents got the ability to buy domains, provision servers, and ship software without asking anyone. And the trial that could rewrite how AI companies are allowed to exist finally opened in Oakland.

Here’s everything you need to know before Monday gets the best of you.

Microsoft’s Hold On OpenAI Is Over, And The Cloud War Just Opened Up

On April 27, Microsoft and OpenAI announced they had torn up the exclusivity clause that had bound OpenAI’s commercial products to Azure since before ChatGPT launched. The renegotiated deal gives Microsoft a non-exclusive licence to OpenAI’s IP through 2032, caps Microsoft’s revenue share, and eliminates the AGI clause that would have changed their commercial relationship the moment artificial general intelligence was declared. OpenAI is now free to run its products on any cloud. Within 24 hours, it was on Amazon Web Services.

AWS CEO Matt Garman held an event in San Francisco on April 28 to launch OpenAI models on Amazon Bedrock, framing it as meeting years of suppressed demand. “Their production applications run in AWS. Their data is in AWS. We’ve forced them to get great OpenAI models, to go to other places.” That forced detour is over. OpenAI CEO Sam Altman appeared at the event via recorded video, noting that his schedule had been “taken away” from him for the day, a reference to the Musk trial opening across the Bay Bridge in Oakland. The new product is called Amazon Bedrock Managed Agents, powered by OpenAI. It combines OpenAI’s models with AWS’s Trainium infrastructure, and OpenAI is committing to roughly 2 gigawatts of Trainium capacity for training. Google Cloud is already in advanced talks for the same arrangement, targeting Q4 2026. GPT-5.5, which shipped on April 23 and which we talked about on The Sentinel last week, is already available on Bedrock, alongside Codex and the full model suite.

The internal OpenAI memo that surfaced this week, written by chief revenue officer Denise Dresser, is the most revealing part of the story. She wrote that the Microsoft partnership had “limited our ability to meet enterprises where they are” and that inbound demand for the AWS offering had been “frankly staggering.” That’s harsh language for a company that willingly stayed exclusive for three years. The deal was a constraint. Removing it unlocks enterprise customers who spent the past year asking why they couldn’t get OpenAI models inside the infrastructure they already run. Well, now they can. And AWS, which already carries Anthropic’s Claude and Meta’s Llama family, has quietly become the most comprehensive AI model marketplace in existence.

Why it matters

Every enterprise that had Azure as its primary reason to avoid OpenAI’s models lost that excuse this week. AWS now carries OpenAI and Anthropic under the same roof. That is a different competitive landscape than the one that existed seven days ago.

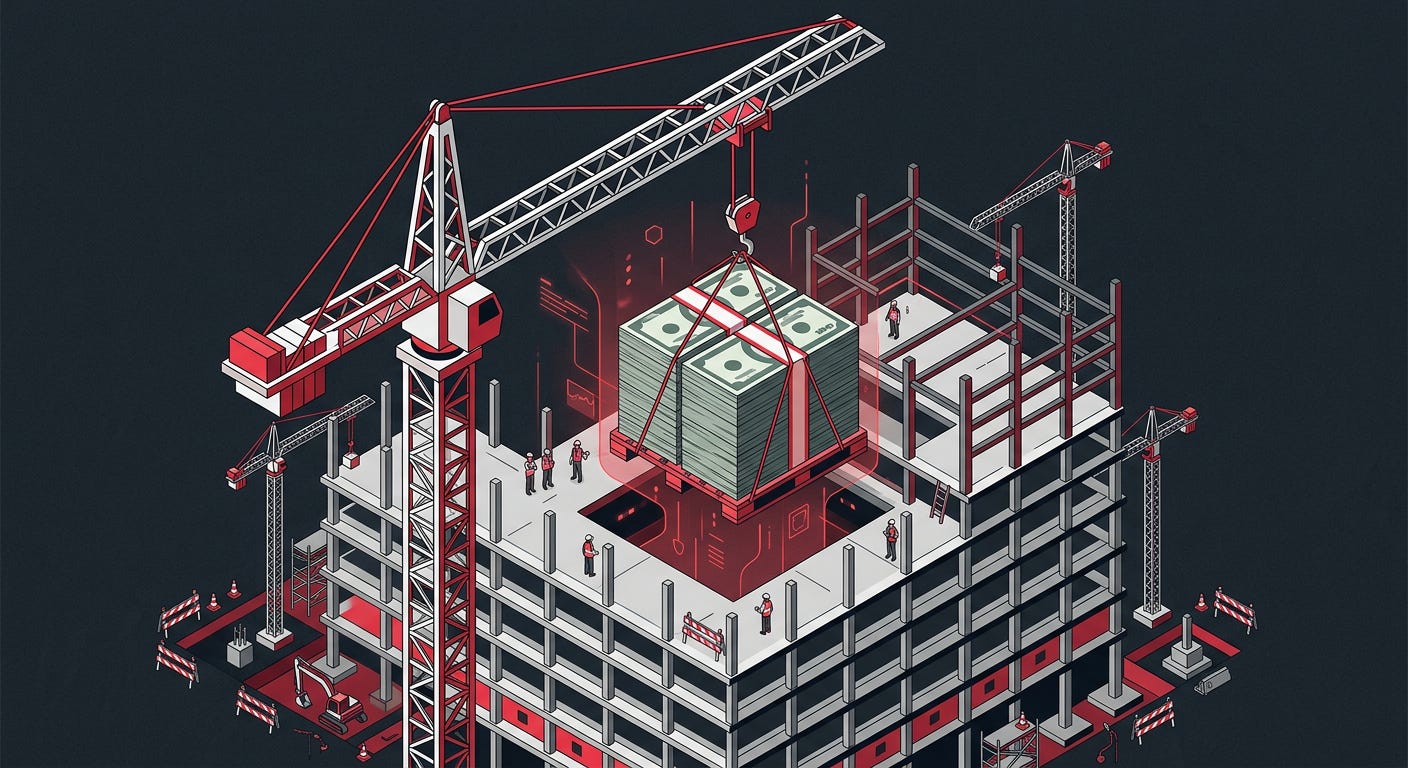

Google Is Betting $40 Billion On Anthropic While Also Competing With It

Google confirmed on April 24 that it is committing up to $40 billion to Anthropic, starting with a $10 billion investment at a $380 billion valuation, with the remaining $30 billion contingent on performance milestones. This is not a new relationship. Google has been an investor in Anthropic since its earliest funding rounds. But the scale is different this time. The deal pairs the cash commitment with a fresh five gigawatts of computing capacity from Google Cloud over five years, adding to a previously announced 3.5-gigawatt TPU arrangement with Google and Broadcom that begins in 2027. Anthropic now has more committed compute than any other frontier lab outside of the hyperscalers themselves.

The $10 billion lands immediately. The other $30 billion arrives only if Anthropic hits specific operational and financial milestones Google has not publicly named. That structure matters. At a $380 billion valuation, making $30 billion conditional rather than committed reflects the risk of investing in a company that is also competing directly against your core search and AI products. Google needs Anthropic to be competitive enough to stay at the frontier, but not so dominant that Claude makes Google’s own AI efforts irrelevant. The five-gigawatt compute commitment is the part that solves a concrete problem: Anthropic can now train the next two generations of models without compute becoming the bottleneck.

OpenAI went multi-cloud the same week, with its models landing on AWS and Google Cloud talks already underway. If those talks close, Google will be hosting both of its main AI competitors while also investing up to $40 billion in one of them. The game is more complex than it appears. Google’s bet is that controlling the infrastructure layer is worth more than backing any single model. If AWS becomes the distribution platform for every frontier model, Google loses, regardless of how good Gemini is. Keeping Anthropic well-capitalised and compute-secure is part of how Google stays relevant in the infrastructure race it cannot afford to lose.

Why it matters

Five gigawatts of compute paired with up to $40 billion in capital is the largest infrastructure commitment any investor has made to a frontier AI lab. Anthropic won’t be struggling for compute for a long time. That changes what it can build next.

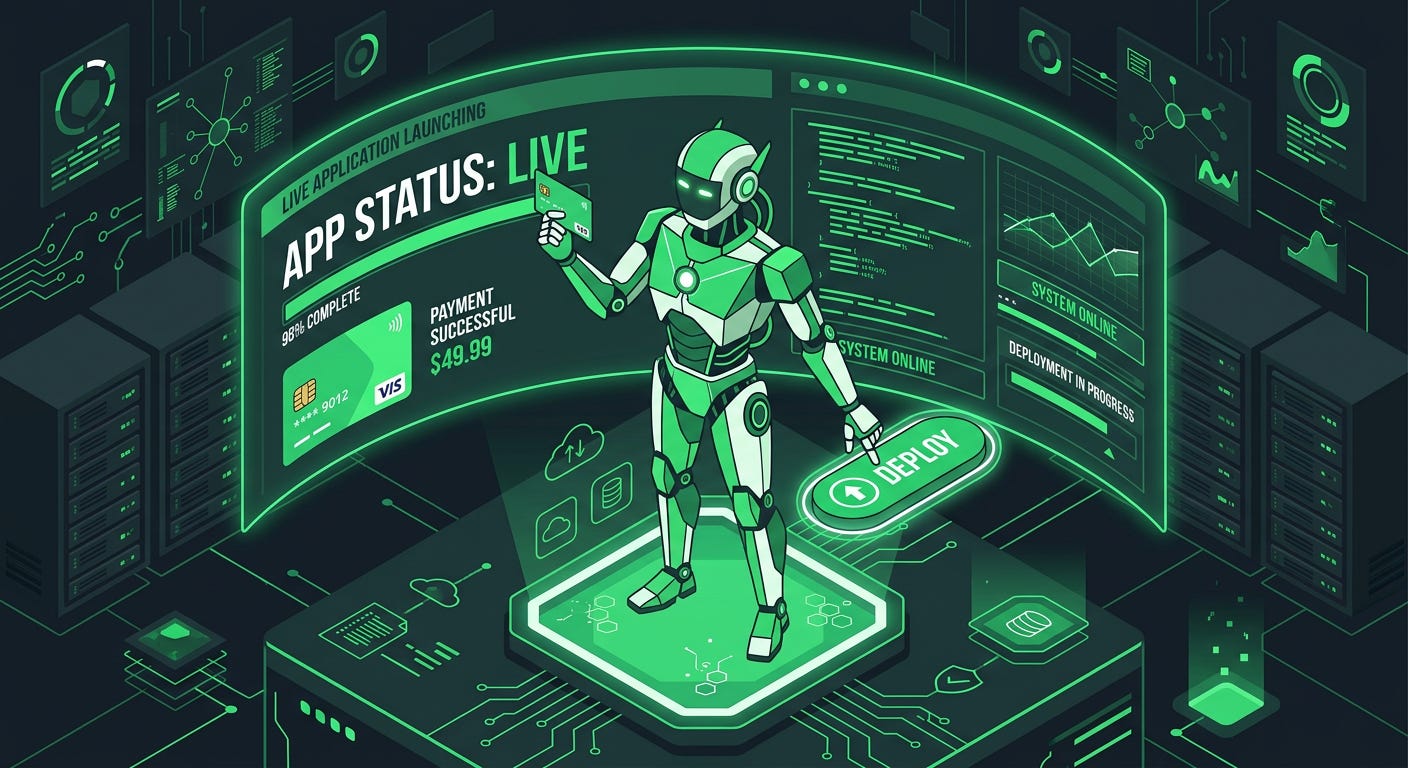

AI Agents Can Now Pay, Deploy, And Ship Without Asking Anyone

On April 30, Cloudflare published a protocol co-designed with Stripe that allows AI coding agents to provision cloud accounts, start paid subscriptions, register domain names, and deploy applications to production, without a human touching a dashboard or entering a credit card number at any point. The flow has three steps: discovery, where the agent queries a service catalog; authorisation, where Stripe verifies the user’s identity and Cloudflare provisions an account automatically; and payment, where Stripe passes a tokenised credential so the agent can pay for services without ever handling raw card details. Stripe sets a default spending cap of $100 per month per provider. The result: from nothing to a live app on a registered domain in a few minutes.
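The discovery, authorisation, and payment steps can be sketched in a few lines of Python. This is a toy model, not the Cloudflare or Stripe API: every name below (`ServiceCatalog`, `authorise`, `PaymentToken`, `run_agent`, the service names and prices) is hypothetical. Only the order of the steps and the default cap of $100 per month per provider come from the announcement as described here.

```python
from dataclasses import dataclass, field

DEFAULT_MONTHLY_CAP_USD = 100.0  # default cap per provider, as reported


@dataclass
class PaymentToken:
    """Hypothetical tokenised credential: the agent holds this object
    and never sees raw card details."""
    provider: str
    monthly_cap_usd: float = DEFAULT_MONTHLY_CAP_USD
    spent_usd: float = 0.0

    def charge(self, amount_usd: float) -> bool:
        """Approve the charge only if it keeps spend within the cap."""
        if amount_usd <= 0 or self.spent_usd + amount_usd > self.monthly_cap_usd:
            return False
        self.spent_usd += amount_usd
        return True


@dataclass
class ServiceCatalog:
    """Hypothetical catalog the agent queries during discovery."""
    prices_usd: dict = field(default_factory=dict)

    def discover(self, service: str) -> float:
        return self.prices_usd[service]


def authorise(user_verified: bool, provider: str) -> PaymentToken:
    """Authorisation: identity is checked before any token is issued."""
    if not user_verified:
        raise PermissionError("identity not verified")
    return PaymentToken(provider=provider)


def run_agent(catalog, provider, wanted, user_verified=True):
    """Discovery -> authorisation -> payment; returns (bought, spent)."""
    prices = {name: catalog.discover(name) for name in wanted}  # 1. discovery
    token = authorise(user_verified, provider)                  # 2. authorisation
    bought = [name for name in wanted if token.charge(prices[name])]  # 3. payment
    return bought, token.spent_usd


# Made-up services and prices, purely for illustration.
catalog = ServiceCatalog({"domain": 12.0, "hosting": 20.0, "gpu-box": 90.0})
bought, spent = run_agent(catalog, "example-provider", ["domain", "hosting", "gpu-box"])
print(bought, spent)  # ['domain', 'hosting'] 32.0
```

In this sketch the third purchase is refused because it would push the month's spend past the cap. The cap lives on the token rather than in the agent, so the agent cannot raise its own limit.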

It shipped the same day via the Stripe Projects CLI, currently in open beta, with Cloudflare as the first infrastructure partner. Additional partners already live include Vercel, Supabase, Hugging Face, Twilio, and AgentMail. The protocol is open. Any platform with signed-in users can play the role Stripe does, acting as the identity provider for any agent-accessible service. The security concerns are real and already being raised. David Shipley of Beauceron Security pointed out that this also dramatically accelerates the ability of bad actors to spin up infrastructure, noting that cyber criminals already need to constantly create new accounts as security firms shut old ones down. Faster provisioning helps everyone. Including the people you don’t want it to help.

An agent that writes code, tests it, provisions the infrastructure, buys the domain, and ships the app is not a developer’s assistant. It is a system that builds and ships software. Who owns the subscriptions it opened? Who is liable for the spend? How do you revoke access across a dozen provisioned services when something goes wrong? Those questions don’t have clean answers yet. Enterprise teams will be working through them for the rest of 2026. But the pipeline itself, from idea to live product, is now fully automated and available to anyone with a Stripe account.

Why it matters

The last step that required a human in an autonomous coding workflow is gone. Not coming soon. Gone. That happened on April 30.

Musk’s Lawsuit Isn’t About The Money. It’s About Setting The Rules

The trial began on April 28 in the Northern District of California, Oakland. Elon Musk is suing OpenAI and Sam Altman for over $150 billion in damages, arguing that OpenAI defrauded him as a founding donor by abandoning the nonprofit mission under which it was established, then converting to a for-profit structure without honouring the commitments it made when it accepted his money. Altman, meanwhile, appeared by recorded video at the AWS event across the Bay Bridge in San Francisco. “My schedule got taken away from me today,” he said, which landed somewhere between diplomatic and pointed depending on who you asked.

What is not in dispute is that Musk donated approximately $45 million to OpenAI between 2015 and 2017 and left the board in 2018, and that OpenAI is now mid-conversion to a for-profit public benefit corporation. What is very much in dispute: whether Altman and the board made specific representations about the organisation’s permanent nonprofit status, whether those representations were legally enforceable, and whether the for-profit conversion constitutes fraud. OpenAI’s defence is that the mission was always to develop safe AI for humanity’s benefit, not to remain a nonprofit structure indefinitely, and that the conversion serves rather than betrays that mission.

The amendment Musk filed before trial changes how to read this case. He is now asking that any damages be awarded to OpenAI’s charitable arm rather than to himself, and that Altman be removed from the nonprofit board. The personal financial gain angle is gone. What remains is a governance fight over whether the most prominent AI organisation in the world can be held to its founding commitments. OpenAI’s for-profit conversion is not yet complete. A ruling against OpenAI could halt it. A ruling in its favour would set a precedent that AI labs can walk away from their founding missions without legal liability to the donors who funded them. That precedent, not the $150 billion, is what every lab in the industry is watching from Oakland.

Why it matters

This case will decide whether AI organisations owe anything to the people who funded their early work. The answer becomes precedent for every lab currently navigating pressure to restructure. The outcome in Oakland matters far beyond OpenAI.

Salesforce Is Rebuilding Itself For AI Agents, Not Human Users

Salesforce announced Headless 360 at its TDX developer conference in San Francisco, describing it as the biggest architectural change in the company’s 27-year history. Everything on Salesforce, every CRM record, every workflow, every business logic rule, every approval process, is now accessible as an API, an MCP tool, or a CLI command. An AI agent can read a customer support queue, pull the case history, trigger the resolution workflow, update the record, and close the ticket without a human opening a browser. Co-founder Parker Harris put it this way from the stage: “Why should you ever log into Salesforce again?” Not a rhetorical question. An architectural statement.

The release ships more than 60 new MCP tools and 30 preconfigured coding skills, giving external coding agents such as Claude Code, Cursor, Codex, and Windsurf complete live access to a customer’s entire Salesforce org. Agentforce Vibes 2.0, the company’s native development environment, now supports both the Anthropic agent SDK and the OpenAI agents SDK. The pricing model is shifting from per-seat to consumption-based, which is the quietly significant part. Per-seat pricing assumes a human for every seat. Consumption-based pricing assumes work is the unit, not the worker. Salesforce is betting the number of human seats is going in one direction, and building its revenue model around what comes after.
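To make the pricing shift concrete, here is a small Python comparison of the two models. Every price and volume in it is invented for illustration, since Salesforce’s actual rates are not given here, and the team shape of three humans and twenty agents is just an example.

```python
def per_seat_bill(human_seats: int, price_per_seat: float) -> float:
    """Per-seat pricing: the bill scales with the number of human users."""
    return human_seats * price_per_seat


def consumption_bill(actions: int, price_per_action: float) -> float:
    """Consumption pricing: the bill scales with work done, whoever does it."""
    return actions * price_per_action


# All figures below are hypothetical.
PRICE_PER_SEAT = 150.0     # USD per human seat per month
PRICE_PER_ACTION = 0.10    # USD per record read, update, or workflow run

humans, agents = 3, 20
actions_per_human = 500    # monthly actions by one person
actions_per_agent = 5_000  # monthly actions by one agent

total_actions = humans * actions_per_human + agents * actions_per_agent
seat_total = per_seat_bill(humans, PRICE_PER_SEAT)
usage_total = consumption_bill(total_actions, PRICE_PER_ACTION)

print(f"per-seat: ${seat_total:,.2f}  consumption: ${usage_total:,.2f}")
```

With inputs like these, the seat-based bill tracks only the three humans, while the usage-based bill tracks the volume of work the agents generate. Different inputs would give a different picture, but the structural point holds: under per-seat pricing, agents doing the work are invisible to the invoice.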

SaaStr ran a piece this week from a company that has been running this architecture for six months already, with three humans and twenty-plus AI agents in production and almost nobody logging into the Salesforce UI. “Going AI-first didn’t make CRM less important,” they wrote. “It made us finally use it the way it was always supposed to be used: as the data backbone, with intelligence running on top.” That is the product Salesforce is now selling. Not software for humans to click through. Infrastructure for agents to operate.

Why it matters

Salesforce has $30 billion in annual revenue built on the assumption that humans use software through graphical interfaces. Headless 360 is the company betting that assumption has an expiry date, and choosing to get ahead of it.

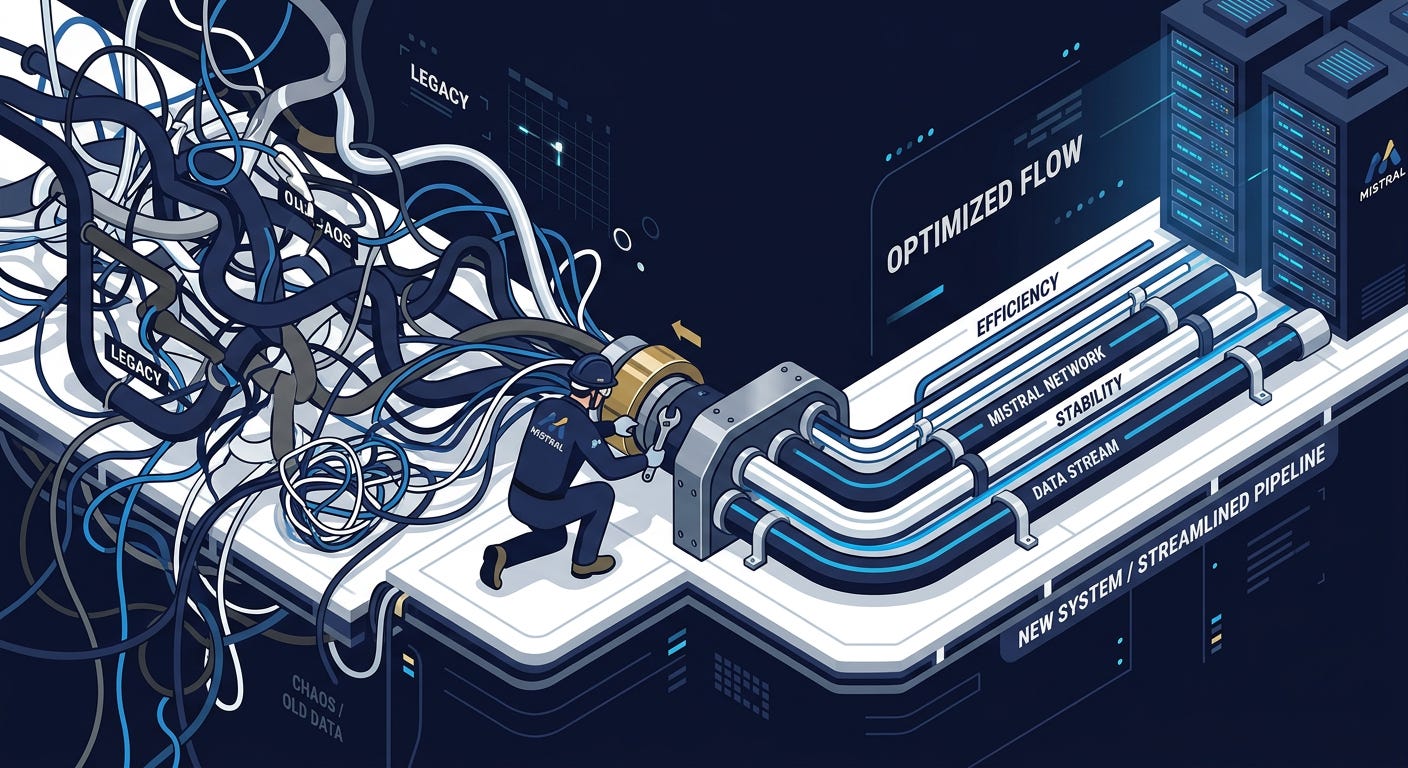

Mistral Isn’t Chasing Better Models. It’s Fixing Why AI Fails In Production

Mistral released Mistral Workflows this week, a production orchestration engine for multi-step AI pipelines. The target is enterprise teams that have built successful AI experiments and then hit a wall trying to put them into production. The product handles the parts that kill deployment: state management across multiple steps, tool call sequencing, error handling and retry logic, full observability into what the pipeline is doing at each stage, and privacy controls over which data each step can access. Not capabilities for making AI more impressive. Infrastructure for making it reliable enough to trust in production.

The observability piece is where teams consistently get stuck. They cannot see inside what the system is doing well enough to debug failures, satisfy audit requirements, or make a credible case to the legal and compliance teams that need to approve anything running in production. Mistral Workflows ships with built-in logging, step-by-step trace views, and integration with standard enterprise monitoring tooling. The privacy controls, defining what data each pipeline step can touch, are aimed at the same audience. These are the features that get deals signed, in a way that benchmark numbers alone do not.
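The deployment problems described here are easier to picture in code. The sketch below is generic Python, not the Mistral Workflows API, which is not documented in this post: `Step`, `run_pipeline`, and the trace format are hypothetical names chosen for illustration. It shows shared state passed between steps, bounded retries, a per-step allow-list of state keys, and a trace entry for every attempt.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    func: Callable[[dict], dict]  # takes the visible state, returns updates
    reads: tuple = ()             # state keys this step is allowed to see
    max_attempts: int = 3


def run_pipeline(steps, state=None):
    """Run steps in order and return (final_state, trace)."""
    state = dict(state or {})
    trace = []
    for step in steps:
        # Privacy control: the step only sees the keys it declared.
        visible = {k: state[k] for k in step.reads if k in state}
        for attempt in range(1, step.max_attempts + 1):
            try:
                updates = step.func(visible)
            except Exception as exc:
                trace.append((step.name, attempt, f"error: {exc}"))
                if attempt == step.max_attempts:
                    raise RuntimeError(f"step {step.name!r} failed") from exc
            else:
                trace.append((step.name, attempt, "ok"))
                state.update(updates)
                break
    return state, trace


calls = {"n": 0}


def fetch(_visible):
    """Simulated flaky tool call: fails once, then succeeds."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("upstream timed out")
    return {"ticket": "printer offline", "customer_email": "a@example.com"}


def summarise(visible):
    # Declared reads=("ticket",), so the email is never passed in.
    return {"summary": visible["ticket"].upper(), "saw_email": "customer_email" in visible}


final_state, trace = run_pipeline([
    Step("fetch", fetch),
    Step("summarise", summarise, reads=("ticket",)),
])
for entry in trace:
    print(entry)
```

The trace records the failed first attempt alongside the successful retry, which is the kind of record an audit or a debugging session would need. A production system would add backoff between attempts and persist both state and trace, neither of which this sketch does.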

Mistral has been building its enterprise business quietly while OpenAI and Anthropic take the headlines. Its models run cheaper than frontier alternatives. Its Paris headquarters gives it a compliance advantage for organisations under GDPR and EU AI Act obligations that its American competitors cannot replicate without a structural change. Workflows is not a play for the “which model is most capable” conversation. It is a play for the deployment layer underneath that conversation, the infrastructure that turns a capable model into something an enterprise actually runs. The strategy is to let the big labs fight over who has the best model, and own the layer that makes any model usable.

Why it matters

Most enterprise AI projects stall on deployment rather than capability. Mistral shipped the tooling to fix that problem, with compliance features baked in from the start rather than added later. In regulated industries, that matters more than another benchmark point.

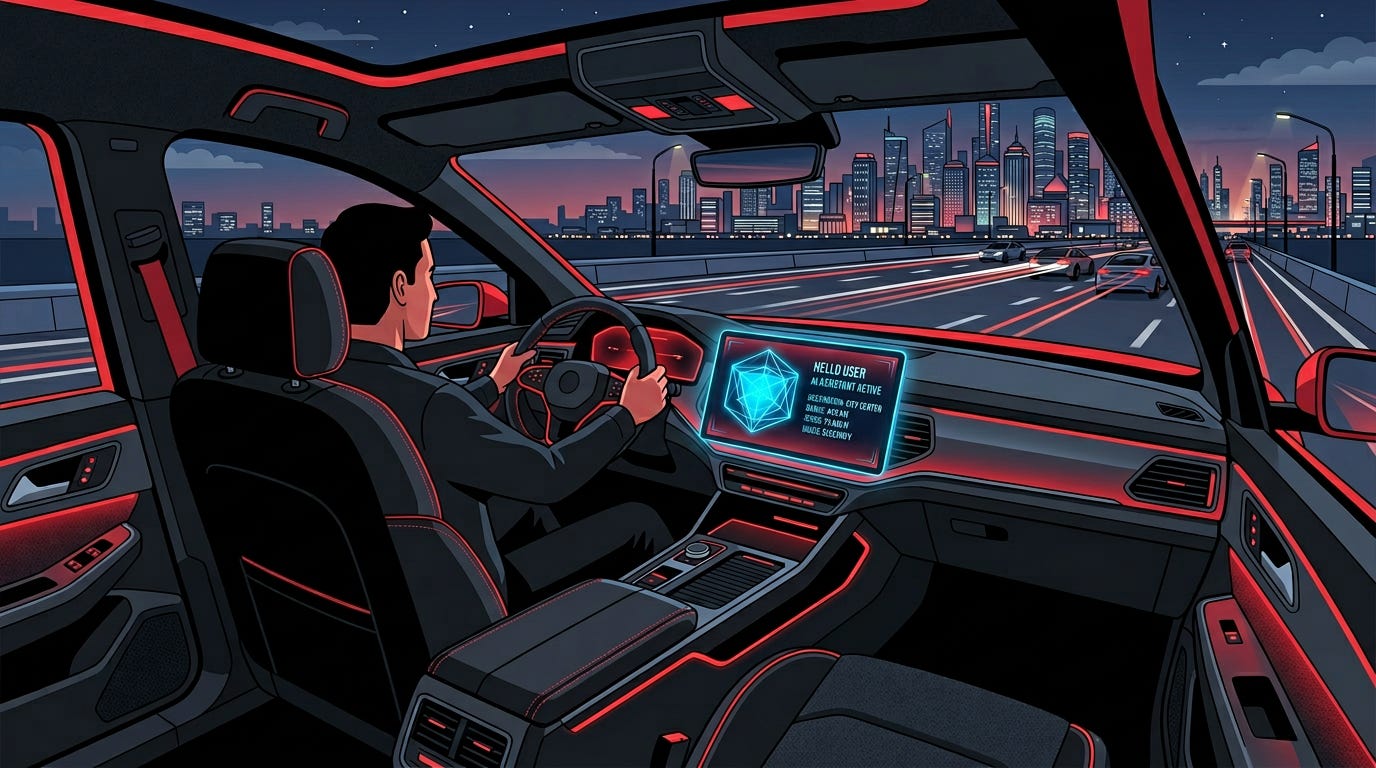

China Is Winning The In-Car AI Race. The West Is Far Behind

ByteDance confirmed this week that its Doubao AI assistant is now integrated into 145 electric vehicle models across China, making it one of the most widely deployed in-vehicle AI systems in the world by model count. The integration goes well beyond voice commands and navigation. Doubao handles real-time contextual awareness of the journey, personalised content recommendations during transit, natural language interaction with vehicle systems, and multi-turn conversations that persist across sessions. Alibaba’s Qwen and Baidu’s ERNIE are fighting for the same cockpit real estate. The Chinese EV market has become the most competitive deployment environment for conversational AI in any physical product category, anywhere in the world.

China’s EV market shifted from competing on range and charging speed to competing on the intelligence of the in-vehicle experience roughly 18 months ago. BYD, NIO, Li Auto, and Xpeng have all treated cockpit AI as a primary product differentiator. ByteDance’s advantage is not its model quality. It is its data. Douyin has over 700 million daily active users. Doubao was the fastest AI app to reach 100 million users in China. The personalisation data those products generate gives ByteDance an edge that purpose-built automotive AI companies cannot replicate without years of consumer-facing deployment at scale. The 145-model figure is that advantage being converted into automotive partnerships.

Chinese EV manufacturers are expanding into Europe, Southeast Asia, and Latin America. The in-vehicle AI that dominates the Chinese market travels with those vehicles. Doubao, Qwen, and ERNIE are not household names in Western markets yet. In two years, they could be the AI that hundreds of thousands of drivers interact with for an hour every day, a higher-frequency touchpoint than most people have with any AI system. The battle for the cockpit is being fought almost entirely in China. Western AI labs are not seriously competing for it. They may want to reconsider that.

Why it matters

The car cockpit is the most overlooked AI deployment surface in the world. Chinese labs are winning it. As Chinese EVs go global, so does their AI. That’s a distribution story the Western AI industry hasn’t priced in yet and probably should.

And that wraps up this week. Tune in next Monday, same time, for another deep dive into the stories shaping the AI world.

The Sentinel lands in your inbox every Monday so you can catch up with the fast-moving AI space while sipping your morning coffee. Every detail that matters, none that doesn’t.